The world feels like it is tilting. In recent days, tensions between Israel and Iran have escalated sharply, sending waves of uncertainty across the Middle East. Friends in Abu Dhabi who normally discuss investments and business opportunities now speak in quieter tones. Some have moved temporarily. Others speak about contingency plans. War has a way of shrinking the distance between abstraction and reality. Fear, once theoretical, becomes immediate.

But while missiles and military maneuvers dominate the headlines, another shift is unfolding quietly in the background. One that may shape the future of power just as profoundly. The race for artificial intelligence has entered a new phase. And the ethical guardrails are beginning to wobble.

When AI Becomes Strategic Infrastructure

Recent reports that OpenAI has stepped into a defence agreement with the Pentagon have sparked intense debate. At a purely technical level, such partnerships are not unprecedented. Governments have always worked with private innovators. Aviation, nuclear technology, and the internet itself all emerged through close collaboration between the state and the private sector.

But artificial intelligence is different. AI is not simply a technology. It is an amplifier of human power. When embedded into defense systems, intelligence analysis, cyber operations, logistics, and battlefield decision-making, AI becomes something far larger than software. It becomes strategic infrastructure.

In the twentieth century, the technologies that shaped geopolitics were steel, oil, and nuclear energy. In the twenty-first century, that role increasingly belongs to data and intelligence systems. And everyone knows it.

The Uneasy Signals Inside the AI Community

Around the same time as the Pentagon announcement, a senior figure at Anthropic, one of the companies most publicly associated with AI safety, resigned. Resignations in technology companies are common. But context matters.

Anthropic was founded by researchers who believed the development of powerful AI required extraordinary caution. The company positioned itself as a counterbalance to the rapid commercialization of AI systems. When figures associated with safety-oriented institutions begin stepping away, observers cannot help but wonder whether deeper tensions are unfolding inside the industry. Is the pressure to deploy AI systems quickly beginning to outpace the commitment to build them safely?

We cannot know the internal dynamics of these organizations. But the signals are difficult to ignore.

The Public Backlash

Shortly after news of the Pentagon collaboration circulated, a wave of criticism erupted online. Calls to “Cancel ChatGPT” began trending globally. Reports suggested dramatic spikes in app uninstallations and negative reviews, while competing AI platforms saw surges in downloads.

Public backlash is rarely a reliable indicator of long-term shifts. Online outrage often burns intensely and disappears just as quickly. But this moment reveals something deeper. For the first time, many ordinary users are realizing that artificial intelligence is no longer just a helpful tool for writing emails or summarizing documents. It is an instrument of geopolitical power. And people are beginning to ask uncomfortable questions about how that power will be used.

The Real Tension Beneath the Surface

For the past decade, the AI industry has spoken extensively about ethics. Alignment. Safety. Responsible deployment. Entire institutions were built around these ideas. Yet today, the development of advanced AI systems is increasingly shaped by a very different force: strategic competition between nations.

When national security becomes involved, the incentives change dramatically. Speed begins to matter more than caution. Capabilities matter more than philosophical reflection.

The tension we are witnessing now is not simply about one company or one government contract. It is about the collision between two visions of AI development. One vision prioritizes caution, governance, and ethical alignment. The other prioritizes strategic advantage. History suggests that when these two forces collide, the outcome is rarely gentle.

A Qur’anic Lens on Power

For those of us who approach technology not only as engineers or entrepreneurs but also as thinkers grounded in ethical traditions, this moment raises deeper questions. In the Qur’anic worldview, human beings are described as khalīfah on earth. Stewards entrusted with responsibility. Power, in this framework, is never morally neutral. It is always a test. Technological power therefore demands not only capability but wisdom and restraint.

Artificial intelligence represents one of the most powerful tools humanity has ever created. It has the capacity to transform medicine, education, governance, and economic systems. But it also has the capacity to magnify human error, conflict, and injustice.

The question facing us today is not whether AI will reshape the world. It already is. The real question is whether humanity will exercise the moral maturity required to govern it.

A Defining Moment

We may look back on this period years from now as the moment when artificial intelligence moved definitively from the realm of innovation into the realm of geopolitics. When AI became part of the global architecture of power. If that is the case, then the decisions being made today will carry consequences far beyond corporate profits or product launches. They will shape the moral architecture of the technological age we are entering.

And perhaps that is why this moment feels so unsettling. Because beneath the headlines, something profound is happening. Humanity has built an extraordinary new instrument of power. And we are only beginning to understand what it means to hold it.

When the Founder Takes the Stand

There is something quietly symbolic about Mark Zuckerberg sitting in a courtroom defending Instagram. Not on a keynote stage. Not unveiling a new feature. But answering questions about design choices that may have shaped the emotional lives of young people. At the centre of the case is a young woman who argues that compulsive use of Instagram and YouTube during childhood contributed to anxiety and depression. The plaintiffs describe these platforms as “digital casinos,” engineered to maximise engagement through infinite scroll, filters, and behavioural cues. Meta maintains that its products create connection and opportunity, and that individual struggles cannot be reduced to interface architecture. The court will decide liability. But the moment itself feels larger than the verdict. For years, social media has been treated as a neutral layer of modern life — occasionally excessive, occasionally controversial, but fundamentally assumed to be part of the background. This trial gently disrupts that assumption. It invites us to ask whether attention, once industrialised at scale, carries moral weight. Design is never entirely neutral. Infinite scroll did not emerge by accident. Beauty filters were not inevitable. Recommendation engines are deliberate constructions, informed by behavioural science and commercial incentives. That does not automatically render them harmful. But it does render them consequential. Reports that internal child-safety and mental health concerns were overruled add a further dimension. Once risk is recognised, the calculus changes. Continuation becomes a conscious trade-off. What makes this moment particularly interesting is that it does not stand alone. Australia recently enacted legislation prohibiting social media access for children under 16, becoming the first country to take such a decisive step. The move was framed explicitly around mental health and developmental protection. It has since sparked similar conversations across Europe. 
France and Spain have advanced restrictions on younger users, the United Kingdom is actively debating comparable measures under its online safety framework, and policymakers in countries such as Denmark and Germany have signalled support for age-based limits. Even in Southeast Asia, discussions around tighter youth access controls are gaining momentum. This is no longer a fringe concern. It is becoming policy. The Zuckerberg trial therefore feels less like an isolated dispute and more like a visible inflection point. Society appears to be recalibrating its expectations of those who design digital environments. And this is where the conversation extends beyond social media. If relatively simple engagement algorithms could influence self-perception and emotional regulation, what of the AI systems now emerging — systems that recommend, predict, classify, and increasingly advise? The scale of influence will only deepen. The essential question is no longer whether technology delivers value. It is whether influence is matched by stewardship. Founders have long been celebrated as innovators. Increasingly, they are being regarded as custodians of psychological and social ecosystems. That is a more demanding role. Innovation will continue. It always does. But credibility, in this next phase, may belong not merely to those who build the most compelling systems, but to those who demonstrate discernment in how they are built — and restraint in how they are deployed. And that is the real significance of seeing a founder in a courtroom. It signals that influence, once admired almost uncritically, is now expected to answer for itself.

Why Chatbots Lose Their Minds Over the Seahorse Emoji

Sometimes, when I am sitting on my bed after a long day, I ponder the digital unknown, and I marvel at how some complicated things become so simple while some simple things become so complicated. Like the seahorse. Have you ever wondered about the seahorse? Or let me put it another way: have you ever had a conversation with your chatbot about a seahorse? If you haven’t, you should try!

The Question That Breaks Bots

Ask a chatbot about the meaning of life, and it’ll give you a TED Talk. Ask it about the seahorse emoji, and it’ll spiral into chaos. Some insist it exists and then cannot produce it. Others hallucinate fish, unicorns, or snails. A few loop like a panicked DJ scratching the same track.

The Unicode Bermuda Triangle

Here is the twist: as of this writing, there is no seahorse emoji. The fish emoji is not it. The unicorn emoji is definitely not it. Search Unicode’s labyrinth as long as you like and you will not find an actual seahorse, even though plenty of people (and plenty of chatbots) are sure they remember one. Emoji aren’t pictures; they’re code points with names and categories. “Sea” + “horse” lives right on the border of fishy and equestrian, and that confuses machines trained to match patterns. Toss in old-school ASCII fish like ><(((°> and suddenly the poor bot is drowning.

Seahorse: Born to Confuse

Even nature couldn’t decide what this creature is. A fish shaped like a horse. A male that gets pregnant. A swimmer that bobs upright like a quirky submarine. Biologists debated it for centuries. No wonder algorithms short-circuit.

What the Seahorse Reveals About AI

The emoji mix-up isn’t just funny; it’s revealing. Machines stumble on “common sense” where categories overlap. To a human, seahorse = obvious. To a model, seahorse = myth, horse, sea, fish, ASCII art, and folklore in one tangled knot. Asking for the seahorse emoji is like tugging the loose thread in AI’s sweater. The seams show.

The Final Moral

Forget trolley problems. Skip cosmic riddles. If you want to see a chatbot sweat, just type: “Show me the seahorse emoji.” And watch the ocean horse break the machine’s brain.
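
If you would rather check than argue with a chatbot, the idea that emoji are code points with names can be tested directly. Here is a minimal sketch using Python’s standard `unicodedata` module. The helper name `find_character` is my own invention, and the answers depend on the Unicode version bundled with your Python. In the versions I am aware of, a lookup for a character named SEAHORSE comes back empty.

```python
import unicodedata


def find_character(name):
    """Return the character with this exact Unicode name, or None if there is none."""
    try:
        return unicodedata.lookup(name)
    except KeyError:
        # unicodedata.lookup raises KeyError when no character has that name.
        return None


for name in ("FISH", "UNICORN FACE", "SNAIL", "HORSE", "SEAHORSE"):
    ch = find_character(name)
    if ch is None:
        print(f"{name}: no such character in this Unicode database")
    else:
        # Show the code point and general category behind the picture.
        print(f"{name}: U+{ord(ch):04X}, category {unicodedata.category(ch)}")
```

The fish, the unicorn, the snail, and the horse all resolve to code points. That contrast is the whole joke: the neighbours exist, and the model fills the gap with whichever one seems closest.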

Chatbots at the Table: What GPT-5, Claude, Grok, and Gemini Are Really Good For

Imagine lining up four AI superheroes, each with a secret superpower. One writes like a scholar, one listens like a sage, one cracks jokes like a streetwise friend, and one juggles text, pictures, and sound like a circus conductor. Together, they’re reshaping how we work, create, and even reflect. Let’s meet the cast.

GPT-5: The Overachieving Study Buddy

At 2:47 a.m., you fling GPT-5 a half-finished thesis, three PDFs, and a chart that looks like modern art gone wrong. By 2:48 a.m., it has reorganized your argument, corrected your references, and even suggested you add a green vegetable to your diet. This model thrives on heavy lifting, an intellectual Swiss Army knife that switches between quick answers and deep thinking depending on the challenge.

Great for: marathon projects, coding from scratch, digesting dense reports, writing long-form content.

Claude: The Friend Who Listens Without Judging

A teenager once told Claude: “I feel invisible.” Instead of serving a canned pep talk, Claude reflected gently, offered listening strategies, and treated the moment with care. It’s more mentor than machine. When asked to explain cold fusion to a five-year-old, Claude didn’t just answer; it spun up a cheerful storybook complete with dancing atoms. Claude brings patience to a world obsessed with speed.

Great for: teaching, coaching, and any moment where empathy matters as much as facts.

Grok: The Rogue in the Room

When someone asked Grok the meaning of life, it shot back: “To make memes faster than your enemies.” That’s Grok in a nutshell: sharp, witty, sometimes a little too spicy. Integrated into Tesla dashboards and livestreamed on X, Grok is bold, fast, and sometimes controversial. Like a stand-up comedian with a live mic, it’s entertaining, but you’ll want to fact-check before quoting it at a board meeting.

Great for: humor, edgy brainstorming, public commentary, and when you need an AI with attitude.

Gemini: The Conductor of Chaos

Picture a startup founder mid-pitch: a messy deck, a shaky demo video, and a photo of a whiteboard crammed with equations. Gemini calmly takes all three, cleans the slides, summarizes the math, and finds the key timestamps in the demo. In classrooms, it has turned doodles and voice notes into polished science projects. Gemini thrives on mixed inputs (text, image, sound, even video) and turns them into structured outputs that make sense.

Great for: projects juggling multiple formats, multimodal workflows, and anyone who needs order carved out of chaos.

A Coffee Shop Encounter with Four Chatbots

Now imagine this: you’re in a café in Cyberjaya, latte in hand, laptop open. GPT-5 quietly reorganizes your inbox and drafts tomorrow’s grant proposal. Claude leans in, notices your stress, and reminds you that balance matters as much as deadlines. Grok blurts out a joke about the barista’s latte art while live-tweeting your to-do list. Gemini takes a photo of the latte, your half-written slide, and a voice memo rant, then turns them into a polished investor deck before the foam settles.

You leave realizing: these aren’t just tools. They’re companions with personalities: quirky, flawed, brilliant. Choosing among them isn’t about “better or worse,” but about matching the right voice to the task.

AI chatbots are no longer faceless engines spitting out text. They’re evolving into distinct characters, each offering a different lens on intelligence. GPT-5 is deep and versatile, Claude is thoughtful and empathetic, Grok is bold and unfiltered, and Gemini orchestrates complexity. Together, they don’t just answer questions; they change how we think, learn, and create.

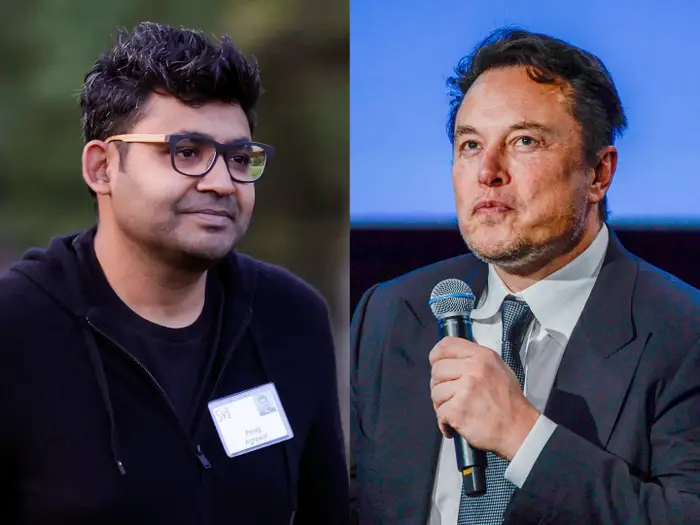

The Bird Was Freed, But Now Comes the Parallel Web

When Elon Musk strutted into Twitter HQ and fired Parag Agrawal with the flourish of a Bond villain, he marked the moment with four cryptic words: “the bird is freed.” The world laughed, groaned, and scrolled on. But while Musk basked in the chaos of social media theatrics, Agrawal quietly slipped into the shadows to build something altogether stranger: a web not for us, but for our AI agents. His new startup, Parallel Web Systems, has already pulled in $30 million and a lineup of serious Silicon Valley backers. What are they building? Plumbing for a parallel internet where AI agents can think, negotiate, and transact without tripping over cookie banners, cat memes, or CAPTCHA tests. Their flagship Deep Research API claims to out-perform even GPT-5 on multi-step reasoning, which sounds less like marketing fluff and more like the scaffolding of a world where machines—not humans—become the primary web citizens. For us mere mortals, this is both exhilarating and unsettling. Imagine an internet where your agent books flights, haggles over prices, and drafts legal briefs—all without you lifting a finger. Convenience, yes. But also: what happens when the “real” internet, the one we doomscroll through, becomes a sideshow, while the real action happens on machine-only highways we can’t even see? Agrawal, once cast out of the birdcage, is now sketching the architecture of this new aviary. The bird may have been “freed,” but it’s he who is quietly building the skies it will fly in. And after all, if the web of tomorrow belongs to the agents, the least we can do is decide whether we’re the spiders… or just the flies.

Nano Bananas and the Shutterstock Shuffle

Imagine a banana so small it could hide under a grain of rice. The “nano banana” isn’t just a fruit of science fiction—it’s a metaphor for the way content is shrinking, splintering, and scattering in the digital economy. For Shutterstock and its rivals, the world once looked like a fruit stall: you came for a nice, big, photogenic banana (a stock photo), and left happy. But today, users want nano bananas—bite-sized, hyper-specific, ready-to-drop visuals. Not just “a man at a desk,” but “a man at a desk wearing socks with galaxies while sipping turmeric latte in 4K.” This changes the game. Algorithms and generative AI don’t trade in chunky bananas—they splice, peel, and rearrange at the molecular level. Stock libraries, meanwhile, must decide: do they keep stacking crates of fruit, or do they embrace the nano scale—granular metadata, ultra-niche aesthetics, endless remixability? The nano banana is a warning and an opportunity. For Shutterstock and the likes, the choice is clear: evolve into the lab where nano bananas are engineered—or risk being the fruit stall everyone walks past on their way to the AI smoothie bar.

Data Sovereignty For Dummies

Data Sovereignty for Dummies (and brilliant, busy people who don’t have time for legalese)

Picture your data as a celebrity cat. It travels, it’s in photos everywhere, and strangers keep trying to pet it. Data sovereignty is the rulebook that says where your cat is allowed to nap, who’s allowed to touch it, and which country’s laws apply when it scratches someone. That’s it. No robes, no gavels, just control.

The 10-second version

- Data residency: Where your data physically lives.
- Data sovereignty: Which laws claim authority over it.
- Data localization: “Don’t let it leave the country. Ever.”
- Sovereign cloud: Cloud setups designed so your data (and keys) follow your country’s rules, not some far-away court order.

Why you should care (even if you’re not a lawyer)

- Regulators care. Fines are the opposite of fun.
- Customers care. Trust sells.
- Courts care. Subpoenas travel faster than your lawyer’s lunch.
- You care when a backup turns out to be in another country, owned by another company, using another set of laws.

Cloud is just… other people’s computers

There is no “cloud kingdom.” There are warehouses with blinking lights, owned by providers, spread across regions. The moment your data crosses a border, physically or by who can access it, it can become subject to someone else’s rules. You didn’t “lose control”; you just outsourced it without reading the map.

What “good” looks like (minus the techno-mysticism)

Think: eight simple building blocks.

1. Classify your data. Public, internal, confidential, secret. Label it like leftovers.
2. Map the flow. Where is data collected, stored, backed up, processed, and viewed? Draw arrows. If you can’t draw it, you can’t govern it.
3. Pick the right regions. Pin your data to specific locations. Avoid mystery “global” settings.
4. Own the keys. Encrypt at rest and in transit. Use customer-managed keys (ideally in a Hardware Security Module). If they own the key, they own the silence.
5. Control access. Least privilege. No shared admin accounts. Log every “who looked at what, when.”
6. Guard cross-border moves. Set rules for exports, vendor support access, and analytics jobs that “temporarily” leave the region. Temporary is how forever begins.
7. Lifecycle discipline. Keep only what you need. Rotate keys. Delete with proof. “Archived forever” is future-you’s horror story.
8. Audit & automate. Policy as code. Continuous checks. Screenshots are not governance.

Myths that refuse to die (like bad memes)

- “We’re encrypted, so we’re done.” Keys live somewhere. Someone holds them. That someone matters.
- “Sovereignty means building a data center.” Not necessarily. Smartly chosen cloud regions + your own keys + policy guardrails can be compliant and sane.
- “Cloud can’t be sovereign.” It can, if you configure it. Defaults are comfort food, not compliance.
- “Localization will kill performance.” Often false. Put compute near data, cache wisely, and stop hauling petabytes across oceans for fun.

Vendor questions that fit on a sticky note

- Where will our data be stored? Name the regions.
- Can we hard-pin storage and backups to those regions?
- Who (including support staff) can access our data, from where?
- Do you support customer-managed keys and HSMs?
- Are telemetry, logs, and analytics kept in-region?
- What leaves the region during incidents or upgrades?
- What’s the breach notification timeline and process?
- Can we get full audit logs on demand?
- What’s the exit plan? Data format, egress, deletion certificate.
- Show us the architecture diagram. If it’s a mystery box, that’s your red flag.

Tiny jargon decoder (no judgment)

- PII: Personal data about a human. Treat like nitroglycerin.
- KMS/HSM: Key vaults; HSMs are the armored kind.
- DLP: Software that screams when secrets try to escape.
- Zero Trust: “We verify everyone, every time.”
- DPA/SCCs: Legal scaffolding for sending data across borders without heartburn.

A one-page starter policy (steal this skeleton)

- Purpose: Keep data in approved regions; obey local laws; avoid surprise exports.
- Scope: All systems, backups, logs, vendors, humans, and helpful robots.
- Classification: Public / Internal / Confidential / Restricted.
- Residency rules: Regions per class; backups must match.
- Keys: Customer-managed, rotated; emergency access requires dual approval.
- Access: Role-based, least privilege, MFA; support access time-boxed and logged.
- Cross-border: Pre-approved routes only; document.
- Retention & deletion: Minimum viable hoarding; verifiable delete.
- Monitoring: Continuous policy checks, quarterly audits, incident drills.

Decision flow (the snack version)

1) Classify → 2) Map flows → 3) Pin regions → 4) Own keys → 5) Lock access → 6) Guard borders → 7) Prove it with logs.

The vibe to remember

Data sovereignty isn’t anti-cloud, anti-growth, or anti-fun. It’s adult supervision for your information. Decide where your data sleeps, who can tuck it in, and which grown-ups get to set the rules. Then automate the boring parts so you can get back to building cool things.
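
To make “policy as code” a little less abstract, here is a minimal sketch of what a continuous residency check might look like. Everything in it is an assumption for illustration: the region names are made up, the `DataStore` fields and the `violations` function are hypothetical, and a real setup would read this inventory from your cloud provider rather than from a hand-written list.

```python
from dataclasses import dataclass

# Hypothetical residency rules: approved regions per data class.
# The region names are invented for this example.
APPROVED_REGIONS = {
    "internal": {"my-central-1", "sg-south-1"},
    "confidential": {"my-central-1"},
    "restricted": {"my-central-1"},
}

# Assumption: these classes must use customer-managed keys.
NEEDS_OWN_KEY = {"confidential", "restricted"}


@dataclass(frozen=True)
class DataStore:
    name: str
    classification: str
    region: str
    backup_region: str
    customer_managed_key: bool


def violations(store):
    """Return a list of human-readable policy problems for one data store."""
    if store.classification == "public":
        return []  # public data is not pinned in this sketch
    allowed = APPROVED_REGIONS.get(store.classification)
    if allowed is None:
        return [f"unknown classification '{store.classification}'"]
    problems = []
    if store.region not in allowed:
        problems.append(f"primary region {store.region} is not approved")
    if store.backup_region not in allowed:
        # Backups must follow the same residency rules as the primary copy.
        problems.append(f"backup region {store.backup_region} is not approved")
    if store.classification in NEEDS_OWN_KEY and not store.customer_managed_key:
        problems.append("customer-managed key required")
    return problems


inventory = [
    DataStore("crm", "confidential", "my-central-1", "my-central-1", True),
    DataStore("audit-logs", "restricted", "my-central-1", "us-east-9", False),
]
for store in inventory:
    for problem in violations(store):
        print(f"{store.name}: {problem}")
```

Run on a schedule or in a deployment pipeline, a check like this turns the starter policy from a document into something that complains on its own when a backup wanders abroad.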

Can AI Outcompose Mozart? The Limits of Machine-Made Music

Artificial intelligence (AI) today can do many astonishing things. It can write essays, draft business plans, and even compose music in the style of Mozart or Chopin. Feed an algorithm enough scores, and it will generate a symphony that sounds convincingly classical. Some listeners may even struggle to tell the difference. But can AI truly surpass the greats? Can it compose music that touches the soul the way Mozart’s requiems or Chopin’s nocturnes do? At first glance, the answer seems to be yes. Algorithms are built to detect patterns, and classical music is nothing if not structured pattern. The harmonies, chord progressions, and motifs that made Mozart timeless are data points that AI can absorb and reproduce at scale. The result is often impressive. Yet what AI cannot capture is why Mozart composed the way he did. His music was not merely a rearrangement of notes; it was an expression of his lived experience, his spiritual temperament, his suffering and joy. Chopin’s nocturnes were not just mathematical exercises — they were deeply personal, born from exile, heartbreak, and longing. AI lacks that. It has no childhood, no heartbreak, no mortality. It does not sit at a piano in candlelight wrestling with the meaning of beauty or the inevitability of death. It simply remixes data. And while it can generate music that is technically perfect, it cannot reach into the metaphysical dimensions of the human condition. Great music is not only heard — it is felt. It is the silence between notes, the vulnerability of a pause, the trembling of a phrase that reflects a soul in motion. Machines cannot replicate that because they do not possess a soul. This is why even the most advanced AI compositions sound “flat” after repeated listening. They impress, but they do not linger. They astonish, but they do not transform. They lack what the ancients called numinous presence — the sense that art connects us to something greater than ourselves. 
This does not mean AI has no role in music. It may well become a collaborator, expanding creative possibilities and lowering barriers to entry. But it will not replace the great composers, for what made them great was not just their mastery of form, but their capacity to pour being into sound. AI may outpace us in speed, but it cannot outdo us in soul. And music, above all, is the sound of the soul. khalifaintelligence.com