The world feels like it is tilting.

In recent days, tensions between Israel and Iran have escalated sharply, sending waves of uncertainty across the Middle East. Friends in Abu Dhabi who normally discuss investments and business opportunities now speak in quieter tones. Some have moved temporarily. Others speak about contingency plans.

War has a way of shrinking the distance between abstraction and reality.

Fear, once theoretical, becomes immediate.

But while missiles and military maneuvers dominate the headlines, another shift is unfolding quietly in the background. One that may shape the future of power just as profoundly.

The race for artificial intelligence has entered a new phase.

And the ethical guardrails are beginning to wobble.

When AI Becomes Strategic Infrastructure

Recent reports that OpenAI has entered into a defense agreement with the Pentagon have sparked intense debate.

Viewed historically, such partnerships are not unprecedented. Governments have always worked with private innovators. Aviation, nuclear technology, and the internet itself all emerged through close collaboration between the state and the private sector.

But artificial intelligence is different.

AI is not simply a technology.

It is an amplifier of human power.

When embedded into defense systems, intelligence analysis, cyber operations, logistics, and battlefield decision-making, AI becomes something far larger than software.

It becomes strategic infrastructure.

In the twentieth century, the technologies that shaped geopolitics were steel, oil, and nuclear energy.

In the twenty-first century, that role increasingly belongs to data and intelligence systems.

And everyone knows it.

The Uneasy Signals Inside the AI Community

Around the same time as the Pentagon announcement, a senior figure at Anthropic, one of the companies most publicly associated with AI safety, resigned.

Resignations in technology companies are common. But context matters.

Anthropic was founded by researchers who believed the development of powerful AI required extraordinary caution. The company positioned itself as a counterbalance to the rapid commercialization of AI systems.

When figures associated with safety-oriented institutions begin stepping away, observers cannot help but wonder whether deeper tensions are unfolding inside the industry.

Is the pressure to deploy AI systems quickly beginning to outpace the commitment to build them safely?

We cannot know the internal dynamics of these organizations.

But the signals are difficult to ignore.

The Public Backlash

Shortly after news of the Pentagon collaboration circulated, a wave of criticism erupted online.

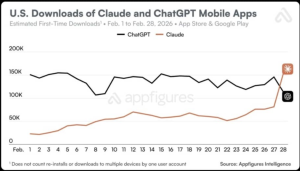

Calls to “Cancel ChatGPT” began trending globally. Reports suggested dramatic spikes in app uninstallations and negative reviews, while competing AI platforms saw surges in downloads.

Public backlash is rarely a reliable indicator of long-term shifts. Online outrage often burns intensely and disappears just as quickly.

But this moment reveals something deeper.

For the first time, many ordinary users are realizing that artificial intelligence is no longer just a helpful tool for writing emails or summarizing documents.

It is an instrument of geopolitical power.

And people are beginning to ask uncomfortable questions about how that power will be used.

The Real Tension Beneath the Surface

For the past decade, the AI industry has spoken extensively about ethics.

Alignment.

Safety.

Responsible deployment.

Entire institutions were built around these ideas.

Yet today, the development of advanced AI systems is increasingly shaped by a very different force:

Strategic competition between nations.

When national security becomes involved, the incentives change dramatically.

Speed begins to matter more than caution.

Capabilities matter more than philosophical reflection.

The tension we are witnessing now is not simply about one company or one government contract.

It is about the collision between two visions of AI development.

One vision prioritizes caution, governance, and ethical alignment.

The other prioritizes strategic advantage.

History suggests that when these two forces collide, the outcome is rarely gentle.

A Qur’anic Lens on Power

For those of us who approach technology not only as engineers or entrepreneurs but also as thinkers grounded in ethical traditions, this moment raises deeper questions.

In the Qur’anic worldview, human beings are described as khalīfah on earth.

Stewards entrusted with responsibility.

Power, in this framework, is never morally neutral.

It is always a test.

Technological power therefore demands not only capability but wisdom and restraint.

Artificial intelligence represents one of the most powerful tools humanity has ever created. It has the capacity to transform medicine, education, governance, and economic systems.

But it also has the capacity to magnify human error, conflict, and injustice.

The question facing us today is not whether AI will reshape the world.

It already is.

The real question is whether humanity will exercise the moral maturity required to govern it.

A Defining Moment

We may look back on this period years from now as the moment when artificial intelligence moved definitively from the realm of innovation into the realm of geopolitics.

When AI became part of the global architecture of power.

If that is the case, then the decisions being made today will carry consequences far beyond corporate profits or product launches.

They will shape the moral architecture of the technological age we are entering.

And perhaps that is why this moment feels so unsettling. Because beneath the headlines, something profound is happening.

Humanity has built an extraordinary new instrument of power. And we are only beginning to understand what it means to hold it.
